It seems as though Facebook’s attempt to help educate its users by enhancing its platform to identify posts that contain “Fake News” articles ran into a snag. It appears using a “Red Flag” or some other strong symbol may cause the reader to not believe the flagged article is fake news.

I have never been a proponent of this type of approach simply because what is or isn’t fake news is often nuanced in exposure and presentation of facts. If you have certain beliefs, you will naturally gravitate to the facts and views that support your convictions. It’s very difficult to be neutral when forming an opinion. It’s even more difficult to leave an echo chamber.

When it comes to politics, this is especially difficult. This effort originated after Trump was elected and after the subsequent conspiracy theory that Russia and Trump collaborated to prevent Hillary from being elected. Politicians, political pundits, and opinion writers often say things that are considered “talking point generalizations” and that often lack factual support. This is a primary reason fact checkers have come around: to help readers square up rhetoric with reality.

Note: Fact checkers hardly check themselves or other media sources they themselves deem as reputable.

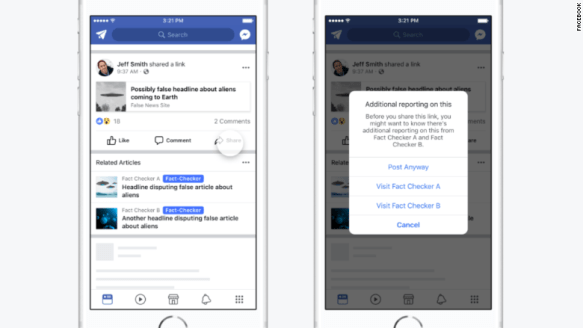

In order for Facebook to flag a post or article as “fake news”, it would be run by “reputable” fact checking sources. If it is determined that it didn’t pass some arbitrary measure as being “not fake”, the post would get a nice red flag attached to it.

Sounds great in theory until the fact checkers began editorializing the facts and inserting a biased narrative. Politifact, one of the more referenced “fact checkers” has often misconstrued statements and applied different contexts to statements made by the political right, while often ignoring glaringly obvious false talking points. They don’t even hide it anymore.

And they aren’t the only ones. Snopes, FactCheck.Org, AP, Washington Pinocchio’s Post and most major news outlets don’t even pretend to deny their bias. The fact checkers often pick which facts to check when they feel it necessary to counter a believable statement that doesn’t align to their narratives. These are the organizations that make up the “fact checkers” Facebook uses.

While Mark Zuckerberg has spent a lot of time trying to convince the public Facebook is to remain non-partisan, his employees were not so shy, even attempting to have Trump’s campaign posts removed during the 2016 campaign. After the election, Facebook came forward with information to help support the narrative of Russian interference and possible collusion.

If you read the articles from CNN, NY Times, and other sources that give numbers, it appears that upwards of 10 million individuals saw at least one ad that originated from a Russian source. While the articles talk about the extent of the meddling, the NY Times article linked shows a number of posts linked to Russian sources.

Essentially, many of the posts are memes that have been floating around the internet for a while. While no geographical information has been released to show where the ads were seen, the articles reiterate the margins of victory for Trump in the blue wall states, but never point to how many voters were influenced, including any that might have switched votes.

Obviously, the point of the articles is not to provide direct evidence of the connection, but rather to imply that there is smoke to support the possibility of malfeasance. The casual reader may come away believing that the evidence was damning in and of itself: Ads were placed by Russians and some were seen in Facebook groups controlled by Russian interests that had thousands of members. Obviously, someone was convinced by what they saw in those ads, changed their mind, and possibly affected the outcome of the election.

In other words, there are a lot of facts presented without obvious connections, and are intended to imply that the election was not legitimate. Isn’t that, by its own definition, fake news?

Pretty sure this effort will not be any more successful considering that Facebook is facing a new set of problems.

Facebook is a shill along with many other prominent social media platforms. They long since sold their neutrality and free speech to the highest bidder along with privacy.

Whether it’s an asset or a data server, if you can’t hold it, you don’t own it.

Fuck Facebook.


Facebook can be bad for you:

https://www.cnbc.com/2017/12/15/facebook-just-admitted-that-using-facebook-can-be-bad-for-you.html

Also, there was a recent statistic that since 2012, half of the briefs for couples filing for divorce contained the word “Facebook.”

Zuckerberg made a lot of money on people’s drama.


I try to stay out of Politics on Facebook…which can be difficult because some of my friends/family like to espouse their political views…on BOTH sides of the political spectrum.

Facebook is just too handy for keeping track of long (thought) lost friends and family, in my opinion, to not use at all.


I use it more to keep in touch and discuss non-political things. Like you, I have friends on both sides. I usually just scroll past the political posts.
